This article traces the 5,000-year journey of computing, highlighting key innovations and thinkers that transformed simple counting tools into the powerful digital systems we rely on today.

Early Foundations and Mechanical Beginnings

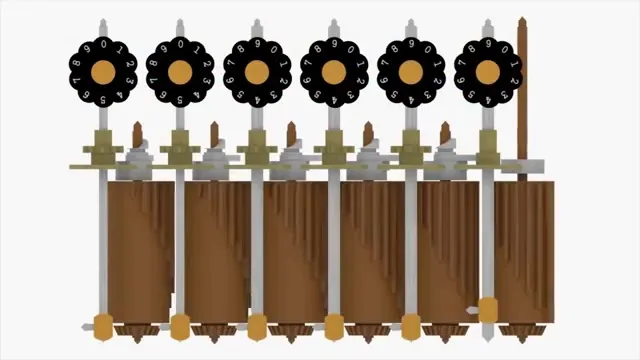

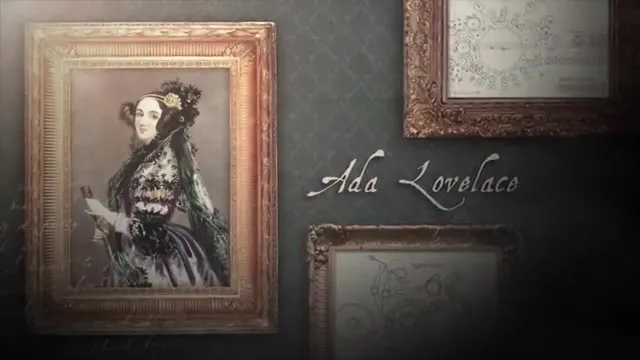

• The abacus (around 3000 BC) was among the earliest counting tools; mechanical calculation followed much later with Blaise Pascal's adding machine (1642).
• Gottfried Leibniz described binary arithmetic and built a four-operation calculator in the late 1600s, laying groundwork for later computing.
• Charles Babbage designed the Difference Engine (1820s) and the Analytical Engine (1830s), envisioning programmable mechanical computers, and Ada Lovelace contributed an early algorithm written for the Analytical Engine.

The Digital Revolution and Modern Computing

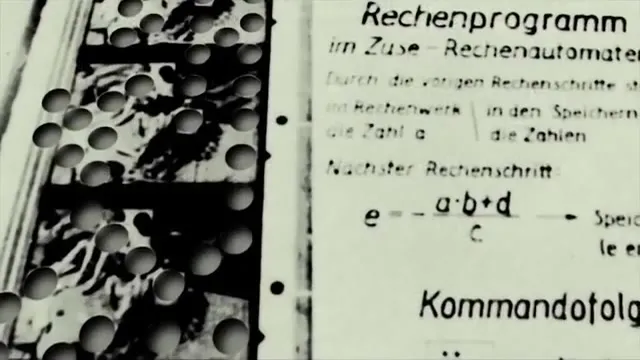

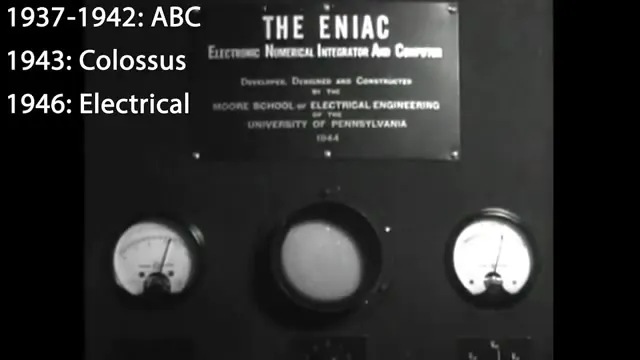

• Alan Turing's universal machine concept (1936) laid the theoretical foundation for computing, while Konrad Zuse's Z3 (1941), generally regarded as the first working programmable computer, used binary arithmetic and Boolean logic.
• Vacuum-tube computers such as the ABC (1942) and ENIAC (1946) enabled high-speed digital processing, while John von Neumann's stored-program concept (1945) shaped modern computer architecture.
• Transistors (1947) and integrated circuits (1958) miniaturized hardware and boosted performance, alongside innovations such as RAM, hard drives, and programming languages like Fortran and C.

Key Takeaways

• Computing evolved over millennia through incremental contributions, from ancient tools like the abacus to mechanical calculators and early digital machines.
• Key breakthroughs included binary arithmetic, programmable designs, vacuum tubes, and transistors, which enabled faster, smaller, and more reliable computers.
• Innovations in hardware (e.g., integrated circuits) and software (e.g., compilers and high-level languages) drove exponential growth, a trend summarized by Moore's Law, the observation that the number of transistors on a chip doubles roughly every two years.

Conclusion

The history of computing demonstrates how collaborative innovation across centuries has accelerated technological progress, shaping the digital world we inhabit today.